TL;DR

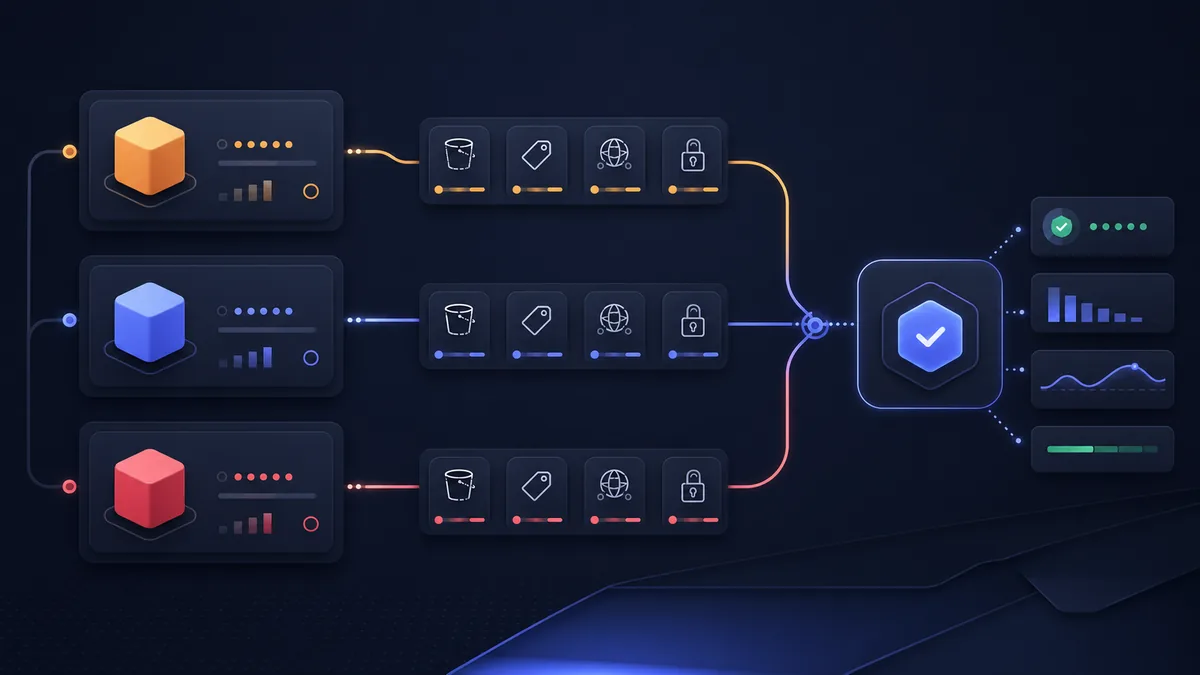

AWS S3 with @aws-sdk/client-s3 is the industry standard — maximum features, best ecosystem, but expensive egress. Cloudflare R2 is S3-compatible with zero egress fees — the most cost-effective choice for high-read workloads (user uploads, media, exports). Backblaze B2 offers the lowest storage prices and is S3-compatible, making it the budget option for large data volumes. In 2026, R2 has emerged as the default choice for most new projects given its S3 compatibility and zero-egress pricing.

Key Takeaways

- AWS S3: Most features, best tooling, but $0.09/GB egress — costs spike under load

- Cloudflare R2: Zero egress fees, S3-compatible API, pairs perfectly with Cloudflare CDN

- Backblaze B2: $0.006/GB storage (cheapest), accessible through either the B2 Native API or its S3-compatible API

- All three work with the AWS S3 SDK — R2 and B2 are S3-compatible

- R2 has a generous free tier: 10GB storage + 1M Class A operations/month

- Use the same SDK code for all three — just change the endpoint and credentials

The S3 Compatibility Story

The key insight: you don't need provider-specific SDKs. Cloudflare R2 and Backblaze B2 both expose an S3-compatible API, so @aws-sdk/client-s3 works with all three with minimal configuration changes.

// Same code, different configs:

// AWS S3:

const s3 = new S3Client({ region: "us-east-1" })

// Cloudflare R2:

const r2 = new S3Client({

region: "auto",

endpoint: `https://${process.env.CLOUDFLARE_ACCOUNT_ID}.r2.cloudflarestorage.com`,

credentials: {

accessKeyId: process.env.R2_ACCESS_KEY_ID!,

secretAccessKey: process.env.R2_SECRET_ACCESS_KEY!,

},

})

// Backblaze B2:

const b2 = new S3Client({

region: "us-west-002", // B2 region

endpoint: "https://s3.us-west-002.backblazeb2.com",

credentials: {

accessKeyId: process.env.B2_KEY_ID!,

secretAccessKey: process.env.B2_APP_KEY!,

},

})
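
Because only the endpoint, region, and credentials differ, the three configurations above can be folded into one helper. The following is a minimal sketch rather than an official pattern: `storageConfig`, `StorageSettings`, and `ClientConfig` are hypothetical names introduced here, and the returned object is meant to be passed to `new S3Client(...)`.

```typescript
// Hypothetical helper: builds the options object for `new S3Client(...)`.
interface StorageSettings {
  provider: "s3" | "r2" | "b2"
  region?: string // AWS or B2 region, e.g. "us-east-1" or "us-west-002"
  accountId?: string // Cloudflare account ID (R2 only)
  accessKeyId?: string
  secretAccessKey?: string
}

interface ClientConfig {
  region: string
  endpoint?: string
  credentials?: { accessKeyId: string; secretAccessKey: string }
}

function storageConfig(s: StorageSettings): ClientConfig {
  const credentials =
    s.accessKeyId && s.secretAccessKey
      ? { accessKeyId: s.accessKeyId, secretAccessKey: s.secretAccessKey }
      : undefined

  if (s.provider === "s3") {
    // No endpoint: the SDK derives it from the region. Credentials are optional
    // because the SDK can resolve them from its default provider chain.
    const config: ClientConfig = { region: s.region ?? "us-east-1" }
    if (credentials) config.credentials = credentials
    return config
  }

  // R2 and B2 need explicit keys issued by the provider.
  if (!credentials) throw new Error(`${s.provider} requires explicit credentials`)

  if (s.provider === "r2") {
    if (!s.accountId) throw new Error("r2 requires accountId")
    return {
      region: "auto",
      endpoint: `https://${s.accountId}.r2.cloudflarestorage.com`,
      credentials,
    }
  }

  // Remaining case: Backblaze B2.
  if (!s.region) throw new Error("b2 requires region")
  return {
    region: s.region,
    endpoint: `https://s3.${s.region}.backblazeb2.com`,
    credentials,
  }
}
```

With this in place, switching providers becomes a configuration change, for example `new S3Client(storageConfig({ provider: "r2", accountId, accessKeyId, secretAccessKey }))`.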

AWS S3 with @aws-sdk/client-s3

The modular v3 SDK offers tree-shaking and a consistent promise-based API:

Core Operations

import {

S3Client,

PutObjectCommand,

GetObjectCommand,

DeleteObjectCommand,

ListObjectsV2Command,

HeadObjectCommand,

CopyObjectCommand,

} from "@aws-sdk/client-s3"

import { getSignedUrl } from "@aws-sdk/s3-request-presigner"

import { Upload } from "@aws-sdk/lib-storage"

import { createReadStream, createWriteStream } from "fs"

import { Readable } from "stream"

import { pipeline } from "stream/promises"

const s3 = new S3Client({

region: process.env.AWS_REGION ?? "us-east-1",

})

const BUCKET = process.env.S3_BUCKET!

// Upload a file:

async function uploadFile(key: string, body: Buffer | Readable, contentType: string) {

await s3.send(new PutObjectCommand({

Bucket: BUCKET,

Key: key,

Body: body,

ContentType: contentType,

// Caching:

CacheControl: "public, max-age=31536000, immutable",

// Metadata:

Metadata: { uploadedBy: "pkgpulse-app" },

}))

return `https://${BUCKET}.s3.${process.env.AWS_REGION ?? "us-east-1"}.amazonaws.com/${key}`

}

// Download a file:

async function downloadFile(key: string, outputPath: string) {

const { Body } = await s3.send(new GetObjectCommand({ Bucket: BUCKET, Key: key }))

if (!(Body instanceof Readable)) throw new Error("Expected stream")

await pipeline(Body, createWriteStream(outputPath))

}

// Get object as buffer (small files):

async function getFileBuffer(key: string): Promise<Buffer> {

const { Body } = await s3.send(new GetObjectCommand({ Bucket: BUCKET, Key: key }))

const chunks: Uint8Array[] = []

for await (const chunk of Body as Readable) {

chunks.push(chunk)

}

return Buffer.concat(chunks)

}

// Delete:

async function deleteFile(key: string) {

await s3.send(new DeleteObjectCommand({ Bucket: BUCKET, Key: key }))

}

// List files with prefix:

async function listFiles(prefix: string) {

const { Contents } = await s3.send(new ListObjectsV2Command({

Bucket: BUCKET,

Prefix: prefix,

MaxKeys: 100,

}))

return Contents ?? []

}

// Check if file exists:

async function fileExists(key: string): Promise<boolean> {

try {

await s3.send(new HeadObjectCommand({ Bucket: BUCKET, Key: key }))

return true

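} catch (err) {

// Treat only a 404 as "missing"; rethrow auth, network, and throttling errors:

const e = err as { name?: string; $metadata?: { httpStatusCode?: number } }

if (e.name === "NotFound" || e.$metadata?.httpStatusCode === 404) return false

throw err

}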

}
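
`listFiles` above returns a single page (capped here at 100 keys). ListObjectsV2 signals further pages through `IsTruncated` and `NextContinuationToken`, and the next request passes that value back as `ContinuationToken`. A minimal sketch of the loop follows. `listAllKeys` and the injected `fetchPage` callback are hypothetical names, used so the loop can be shown without a live bucket; in real code `fetchPage` would wrap `s3.send(new ListObjectsV2Command({ Bucket, Prefix, ContinuationToken: token }))`.

```typescript
// The ListObjectsV2 response fields that the loop relies on.
interface ListPage {
  Contents?: { Key?: string }[]
  IsTruncated?: boolean
  NextContinuationToken?: string
}

// Hypothetical helper: follows continuation tokens until the listing is complete.
async function listAllKeys(
  fetchPage: (token?: string) => Promise<ListPage>,
): Promise<string[]> {
  const keys: string[] = []
  let token: string | undefined
  do {
    const page = await fetchPage(token)
    for (const obj of page.Contents ?? []) {
      if (obj.Key !== undefined) keys.push(obj.Key)
    }
    // Continue only when the service reports that more results exist.
    token = page.IsTruncated ? page.NextContinuationToken : undefined
  } while (token !== undefined)
  return keys
}
```

For very large prefixes, collecting every key in memory may not be appropriate; the same loop can be turned into an async generator that yields one page at a time.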

Large File Uploads (Multipart)

// Use lib-storage for reliable large file uploads (automatic multipart):

async function uploadLargeFile(key: string, filePath: string) {

const upload = new Upload({

client: s3,

params: {

Bucket: BUCKET,

Key: key,

Body: createReadStream(filePath),

ContentType: "application/octet-stream",

},

queueSize: 4, // Concurrent uploads

partSize: 5 * 1024 * 1024, // 5MB parts (minimum)

})

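upload.on("httpUploadProgress", (progress) => {

// total is undefined when the body length is unknown, so skip the report:

if (progress.loaded === undefined || !progress.total) return

const percent = Math.round((progress.loaded / progress.total) * 100)

console.log(`Upload progress: ${percent}%`)

})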

await upload.done()

}
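
The 5MB part size above is the S3 minimum, and an S3 multipart upload is limited to 10,000 parts, so a fixed 5MB part size tops out at roughly 48.8GiB per object. One way to handle larger files is to derive the part size from the file size. The helper below is an illustrative sketch: `choosePartSize` is a hypothetical name, and R2 and B2 may document different limits, so check the provider before relying on these constants.

```typescript
const MIB = 1024 * 1024
const MIN_PART_SIZE = 5 * MIB // S3 minimum for every part except the last
const MAX_PARTS = 10000 // S3 limit on parts per multipart upload

// Hypothetical helper: smallest part size, rounded up to a whole MiB,
// that keeps the upload within MAX_PARTS.
function choosePartSize(fileSizeBytes: number): number {
  const needed = Math.ceil(fileSizeBytes / MAX_PARTS)
  const rounded = Math.ceil(needed / MIB) * MIB
  return Math.max(MIN_PART_SIZE, rounded)
}
```

In `uploadLargeFile`, this could replace the fixed value, for example `partSize: choosePartSize(statSync(filePath).size)` with `statSync` imported from `fs`.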

Presigned URLs

// Generate a presigned upload URL (client uploads directly to S3 — no server proxy):

async function getPresignedUploadUrl(key: string, contentType: string) {

const command = new PutObjectCommand({

Bucket: BUCKET,

Key: key,

ContentType: contentType,

})

const url = await getSignedUrl(s3, command, { expiresIn: 3600 }) // 1 hour

return url

}

// Generate a presigned download URL (temporary access to private file):

async function getPresignedDownloadUrl(key: string, expiresInSeconds = 300) {

const command = new GetObjectCommand({

Bucket: BUCKET,

Key: key,

ResponseContentDisposition: `attachment; filename="${key.split("/").pop()}"`,

})

return getSignedUrl(s3, command, { expiresIn: expiresInSeconds })

}

// Client-side direct upload with presigned URL:

async function clientUpload(presignedUrl: string, file: File) {

const response = await fetch(presignedUrl, {

method: "PUT",

body: file,

headers: { "Content-Type": file.type },

})

if (!response.ok) throw new Error(`Upload failed: ${response.statusText}`)

}
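
`getPresignedDownloadUrl` above interpolates the last key segment directly into the `Content-Disposition` value, which yields a malformed header when the file name contains a double quote or non-ASCII characters. The following is a hedged sketch of one way to build the header using the `filename*` form described in RFC 6266 and RFC 5987. `contentDisposition` and `encodeRFC5987` are hypothetical helper names, not part of the SDK.

```typescript
// Percent-encode for an RFC 5987 ext-value. encodeURIComponent leaves
// ' ( ) * unescaped, and those characters are not allowed there.
function encodeRFC5987(value: string): string {
  return encodeURIComponent(value).replace(
    /['()*]/g,
    (c) => "%" + c.charCodeAt(0).toString(16).toUpperCase(),
  )
}

// Hypothetical helper: ASCII fallback in `filename`, full name in `filename*`.
function contentDisposition(key: string): string {
  const name = key.split("/").pop() || "download"
  // Replace anything outside printable ASCII, plus quotes and backslashes.
  const fallback = name.replace(/[^\x20-\x7e]|["\\]/g, "_")
  return `attachment; filename="${fallback}"; filename*=UTF-8''${encodeRFC5987(name)}`
}
```

The result can be passed as `ResponseContentDisposition: contentDisposition(key)` when creating the `GetObjectCommand`.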

Cloudflare R2

R2 is AWS S3-compatible with zero egress fees. Use the same @aws-sdk/client-s3 — just point it at R2:

import { S3Client, PutObjectCommand } from "@aws-sdk/client-s3"

// R2 client setup:

const r2 = new S3Client({

region: "auto",

endpoint: `https://${process.env.CLOUDFLARE_ACCOUNT_ID}.r2.cloudflarestorage.com`,

credentials: {

accessKeyId: process.env.R2_ACCESS_KEY_ID!,

secretAccessKey: process.env.R2_SECRET_ACCESS_KEY!,

},

})

// Core S3 operations (put, get, delete, list) work the same:

await r2.send(new PutObjectCommand({

Bucket: "pkgpulse-exports",

Key: `reports/${userId}/2026-03.csv`,

Body: csvBuffer,

ContentType: "text/csv",

}))

R2 with public bucket + custom domain:

R2 can serve files publicly via a custom domain (e.g., cdn.pkgpulse.com) — completely free egress:

// R2 public URL (after configuring custom domain in Cloudflare dashboard):

function getR2PublicUrl(key: string) {

return `https://cdn.pkgpulse.com/${key}`

}

// Or via the r2.dev subdomain (no custom domain needed; rate-limited, so best kept to development):

function getR2DevUrl(key: string) {

return `https://pub-${process.env.R2_BUCKET_ID}.r2.dev/${key}`

}

R2 Workers integration (edge-side storage access):

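// Cloudflare Worker with R2 binding (no SDK needed):

// R2Bucket comes from @cloudflare/workers-types; MY_BUCKET is the binding name set in the Wrangler config:

interface Env {

MY_BUCKET: R2Bucket

}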

export default {

async fetch(request: Request, env: Env): Promise<Response> {

const key = new URL(request.url).pathname.slice(1)

// Direct R2 binding: the Worker reads the bucket without signed HTTP requests:

const object = await env.MY_BUCKET.get(key)

if (!object) {

return new Response("Not found", { status: 404 })

}

return new Response(object.body, {

headers: {

"Content-Type": object.httpMetadata?.contentType ?? "application/octet-stream",

"Cache-Control": "public, max-age=86400",

},

})

},

}

Backblaze B2

B2 has the lowest per-GB storage price of the three. Use it through its S3-compatible API:

import { S3Client, PutObjectCommand } from "@aws-sdk/client-s3"

// B2 S3-compatible client:

const b2 = new S3Client({

region: process.env.B2_REGION!, // e.g., "us-west-002"

endpoint: `https://s3.${process.env.B2_REGION}.backblazeb2.com`,

credentials: {

accessKeyId: process.env.B2_KEY_ID!,

secretAccessKey: process.env.B2_APP_KEY!,

},

})

// Usage is identical to AWS S3:

await b2.send(new PutObjectCommand({

Bucket: process.env.B2_BUCKET!,

Key: "large-dataset/packages-2026.json",

Body: jsonBuffer,

ContentType: "application/json",

}))

B2 + Cloudflare CDN (Bandwidth Alliance — zero egress from B2 to CF):

Upload → B2 Storage ($0.006/GB) → Cloudflare CDN (free egress from B2) → End Users (free egress from Cloudflare)

B2 is a member of Cloudflare's Bandwidth Alliance — transfers from B2 to Cloudflare are free, making B2+CF a cost-effective CDN setup.

Pricing Comparison (2026)

| Cost Factor | AWS S3 | Cloudflare R2 | Backblaze B2 |
|---|---|---|---|
| Storage (per month) | $0.023/GB | $0.015/GB | $0.006/GB |
| Egress | $0.09/GB | $0.00 | $0.01/GB (B2→CF free) |
| PUT requests | $0.005/1K | $0.0045/1K | $0.004/1K |
| GET requests | $0.0004/1K | $0.00036/1K | $0.0004/1K |
| Free tier | 5GB/month | 10GB + 1M ops | 10GB |
| Example monthly bill (100GB stored, 500GB egress, requests excluded) | ~$47 | ~$1.50 | ~$5.60 |
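
The example scenario in the last row (100GB stored, 500GB egress) can be reproduced from the per-GB rates in the table. Below is a small sketch of that arithmetic, ignoring request charges and free tiers. `monthlyCost` and the `PRICES` map are hypothetical names, and the rates are copied from the table above rather than fetched from the providers.

```typescript
interface Rates {
  storagePerGB: number // USD per GB stored per month
  egressPerGB: number // USD per GB transferred out
}

// Rates copied from the pricing table; update them when provider pricing changes.
const PRICES: Record<"s3" | "r2" | "b2", Rates> = {
  s3: { storagePerGB: 0.023, egressPerGB: 0.09 },
  r2: { storagePerGB: 0.015, egressPerGB: 0 },
  b2: { storagePerGB: 0.006, egressPerGB: 0.01 },
}

// Hypothetical helper: storage plus egress cost, rounded to cents.
function monthlyCost(
  provider: keyof typeof PRICES,
  storedGB: number,
  egressGB: number,
): number {
  const { storagePerGB, egressPerGB } = PRICES[provider]
  const total = storedGB * storagePerGB + egressGB * egressPerGB
  return Math.round(total * 100) / 100
}

console.log(monthlyCost("s3", 100, 500)) // 47.3
console.log(monthlyCost("r2", 100, 500)) // 1.5
console.log(monthlyCost("b2", 100, 500)) // 5.6
```

Egress dominates the S3 figure: $45 of the $47.30 comes from the 500GB transferred out, which is why the gap widens as read traffic grows.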

Feature Comparison

| Feature | AWS S3 | Cloudflare R2 | Backblaze B2 |
|---|---|---|---|
| S3-compatible | ✅ Native | ✅ | ✅ |
| CDN integration | S3+CloudFront | ✅ Built-in CF | ✅ Bandwidth Alliance |
| Versioning | ✅ | ✅ | ✅ |
| Lifecycle rules | ✅ | ✅ | ✅ |
| Presigned URLs | ✅ | ✅ | ✅ |
| Event notifications | ✅ SNS/SQS | ✅ Workers | ✅ Webhooks |
| Multi-region | ✅ | ⚠️ Single region | ⚠️ Single region |
| Compliance (HIPAA, SOC2) | ✅ Extensive | ✅ | ✅ |
| Object locking (WORM) | ✅ | ✅ | ✅ |
| Edge storage (Workers) | ❌ | ✅ | ❌ |
| Server-side encryption | ✅ | ✅ AES-256 | ✅ |

When to Use Each

Choose AWS S3 if:

- You're already in the AWS ecosystem (EC2, Lambda, ECS)

- You need multi-region replication or complex lifecycle policies

- You need HIPAA, FERPA, or PCI compliance and already operate under AWS agreements such as a Business Associate Agreement (BAA)

- You need S3 Event Notifications → SQS/SNS/Lambda triggers

Choose Cloudflare R2 if:

- Egress costs are a concern (CDN-served files, user downloads, exports)

- You're already using Cloudflare (Workers, Pages, CDN)

- Zero egress is important for public assets (profile pictures, generated reports)

- You want the simplest cost structure

Choose Backblaze B2 if:

- You need the lowest storage cost for large data archives

- You already use Cloudflare CDN (B2→CF egress is free via Bandwidth Alliance)

- Budget is a primary constraint for cold/warm storage

- You don't need the edge compute integration of R2 Workers

Direct-Upload Architecture with Presigned URLs

A common architecture mistake is routing file uploads through your application server. When a user uploads a profile picture or report export, sending that binary data through your Node.js API wastes bandwidth, increases server memory pressure, and extends upload latency. The correct pattern is presigned URL direct upload: your server generates a short-lived signed URL, the client uploads directly to S3/R2/B2 using that URL, and your server records the object key after the upload completes.

This pattern works identically across all three providers because they share the same @aws-sdk/s3-request-presigner interface. The presigned PutObject URL encodes the target bucket, key, content type, and expiration time into the URL signature. The client sends a PUT request directly to the storage endpoint — no server proxy required. For browser-based uploads, you need to configure the bucket's CORS policy to allow PUT requests from your application's origin. AWS S3, R2, and B2 all support CORS configuration, though the setup UI differs. R2's CORS configuration is done via the Cloudflare dashboard or Wrangler CLI, B2's via the B2 console or API, and S3's via the AWS console or CloudFormation.
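
For the S3 API, a CORS policy is a list of rules that can be applied with `PutBucketCorsCommand` from `@aws-sdk/client-s3`. The sketch below only builds the command input as a plain object so that it stays self-contained. `buildCorsInput` is a hypothetical helper and the origin is a placeholder; whether a given provider accepts this call through its S3-compatible endpoint should be checked against its documentation.

```typescript
// Mirrors the CORS rule fields used by the S3 PutBucketCors operation.
interface CorsRule {
  AllowedOrigins: string[]
  AllowedMethods: string[]
  AllowedHeaders: string[]
  ExposeHeaders: string[]
  MaxAgeSeconds: number
}

// Hypothetical helper: input for `new PutBucketCorsCommand(...)` that permits
// browser PUT uploads from the given origins.
function buildCorsInput(bucket: string, origins: string[]) {
  const rule: CorsRule = {
    AllowedOrigins: origins, // list exact origins rather than "*" where possible
    AllowedMethods: ["PUT"], // presigned uploads use PUT
    AllowedHeaders: ["Content-Type"], // header sent by the client upload
    ExposeHeaders: ["ETag"], // lets the browser read the object's ETag
    MaxAgeSeconds: 3600, // how long the browser may cache the preflight
  }
  return { Bucket: bucket, CORSConfiguration: { CORSRules: [rule] } }
}

const corsInput = buildCorsInput("my-uploads", ["https://app.example.com"])
console.log(JSON.stringify(corsInput, null, 2))
```

Applying it would look like `await s3.send(new PutBucketCorsCommand(corsInput))`. If the client sends additional headers with the upload, they need to appear in `AllowedHeaders` as well, or the preflight request will fail.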

The presigned URL approach also enables upload progress tracking without streaming through your server. In the browser, XMLHttpRequest's upload progress events report bytes transferred and can drive a progress bar; fetch has no built-in upload progress event. Cloudflare's Durable Objects can be used with R2 to track upload state at the edge for applications that need server-side upload progress monitoring.

Disaster Recovery and Data Durability

All three providers advertise 11 nines (99.999999999%) of object durability, backed by redundant storage and regular integrity checks. The meaningful differences are in recovery time objectives and cross-region options, which matter for regulated industries and enterprises with SLA requirements.

AWS S3 has the most complete disaster recovery story: Cross-Region Replication (CRR) automatically copies objects to a bucket in another AWS region, enabling recovery from a full regional outage. S3 Versioning preserves every version of every object, so accidental deletion or overwrite is reversible. S3 Object Lock (WORM mode) satisfies compliance requirements like SEC Rule 17a-4 that require immutable audit logs. These features are mature and well-documented, but each adds cost — CRR doubles your storage and incurs cross-region data transfer fees.

Cloudflare R2's replication model is handled at the Cloudflare network level rather than by the user. R2 stores data redundantly across multiple data centers within a region, but cross-region redundancy is not user-configurable as of 2026 — Cloudflare manages geographic distribution as a platform decision. For most applications this is acceptable; R2's uptime has been excellent since GA. For applications requiring explicit multi-region compliance (e.g., GDPR data residency in specific EU locations), R2's single-region-per-bucket model requires careful bucket placement. Backblaze B2 similarly offers single-region storage with multiple internal copies, and their published SLA matches AWS S3's 99.99% availability target.

Security, Access Control, and Compliance Considerations

Object storage security varies significantly across the three providers in ways that matter for applications handling sensitive data. AWS S3's IAM system is the most granular — you can restrict access by IP range, time of day, VPC endpoint, and even specific S3 API actions (distinguishing between PutObject and DeleteObject permissions). For regulated environments, S3's integration with AWS Config, CloudTrail, and GuardDuty provides audit trails that satisfy SOC 2, HIPAA, and PCI DSS requirements out of the box.
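
As an illustration of per-action permissions, the following sketch builds an IAM policy document that allows uploads under one prefix and deliberately omits delete permission. `uploadOnlyPolicy` is a hypothetical helper, and the bucket name and prefix are placeholders; the document structure (`Version`, `Statement`, `Effect`, `Action`, `Resource`) is the standard IAM policy format.

```typescript
// Hypothetical helper: policy document granting s3:PutObject on one prefix only.
function uploadOnlyPolicy(bucket: string, prefix: string) {
  // Normalize so "uploads", "/uploads" and "uploads/" give the same resource ARN.
  const cleanPrefix = prefix.replace(/^\/+|\/+$/g, "")
  const path = cleanPrefix === "" ? "*" : `${cleanPrefix}/*`
  return {
    Version: "2012-10-17",
    Statement: [
      {
        Effect: "Allow",
        Action: ["s3:PutObject"], // s3:DeleteObject is deliberately omitted
        Resource: `arn:aws:s3:::${bucket}/${path}`,
      },
    ],
  }
}

console.log(JSON.stringify(uploadOnlyPolicy("my-bucket", "uploads/"), null, 2))
```

Note that `s3:PutObject` still permits overwriting an existing key, so this policy limits deletion but does not by itself make objects immutable.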

Cloudflare R2's access control is simpler but sufficient for most applications. R2 tokens are scoped to specific buckets and can be read-only, write-only, or read-write. R2 does not have the IAM depth of AWS S3, but for most SaaS applications — where access control is enforced at the application layer and the storage layer is a trusted backend service — this simplicity is an advantage. R2's default encryption at rest (AES-256) matches AWS S3's standard encryption, and R2's compliance certifications include SOC 2 Type II.

Backblaze B2's application key system provides bucket-level scoping and prefix-level restrictions — you can create a key that can only write to uploads/ within a bucket. B2 does not have the policy-language flexibility of AWS IAM, but its security model is straightforward to audit and verify. B2 holds SOC 2 Type II certification and supports server-side encryption with either B2-managed keys (SSE-B2) or customer-managed keys (SSE-C). For teams whose security requirements are moderate — protecting data at rest and in transit, but not requiring fine-grained per-action IAM policies — B2's simpler model reduces configuration errors compared to AWS S3's more powerful but more complex IAM.

Methodology

Pricing from official provider documentation (March 2026). Feature comparison based on @aws-sdk/client-s3 v3.x, Cloudflare R2 API documentation, and Backblaze B2 S3-compatible API documentation. Cost calculations use public pricing tiers.
